The wrong multi-tenant saas architecture costs $100K and 6 months to fix. Three proven patterns dominate B2B saas in 2026: pooled (shared database, row-level security), silo (schema-per-tenant or database-per-tenant), and bridge (hybrid). Each pattern carries different costs across compliance, scaling, operational complexity, and migration risk. The wrong choice locks the saas into a multi-month rewrite when an enterprise customer asks for data residency, when HIPAA enters the sales pipeline, or when a single noisy tenant brings down the whole platform.

This guide answers the question of which multi-tenant saas architecture pattern to choose, with code examples for Postgres row-level security, a 5-question decision tree, real architectural patterns from production saas, and the migration paths between models. It also covers data residency under GDPR, HIPAA isolation requirements, noisy-neighbor throttling, tenant identification strategies, and the most common security pitfalls in multi-tenant saas architecture.

Five takeaways before reading on: the three patterns and where each wins, the Tenancy Decision Tree framework, the Postgres RLS code that actually ships, the 5 multi-tenant saas architecture breach patterns to avoid, and the migration cost from one model to another. For the broader build framework that places this architectural decision in context, see how to build a saas in 2026.

What Multi-Tenant Saas Architecture Actually Means

Multi-tenant saas architecture is the architectural property that lets one application instance serve many customers (tenants) safely, with strict data isolation between them. It is the reason a saas can charge $99 per month and still be profitable: the marginal cost of an additional tenant approaches zero when the architecture is right.

The “actually” in this section header matters because founders new to multi-tenancy frequently confuse three different concepts. First, multi-tenancy is not the same as multi-user. One organization with many users is still a single tenant; a multi-tenant saas serves many separate organizations from one application. Second, multi-tenancy is not just “we have a tenant_id column.” A correct multi-tenant saas architecture enforces isolation at the data layer, not just the application layer, so that a bug in application code cannot leak tenant data. Third, multi-tenancy is not optional for saas pricing economics: per-tenant infrastructure costs that scale linearly with customer count make low-tier pricing unprofitable.

The dominant 2026 pattern: a single application instance, a single database (or small number of databases), data tagged by tenant_id, and isolation enforced through Postgres row-level security or equivalent middleware. This is what most fixed-price MVP packages assume by default. Variations exist for compliance-heavy verticals (HIPAA, financial services) and for tenants with strong data-residency requirements, but the default pattern is shared.

The reason multi-tenant saas architecture decisions deserve their own article: the wrong pattern costs $100K and 6 months to fix, and most founders make the choice in 30 minutes during stack planning without understanding the implications.

The Three Multi-Tenant Saas Architecture Patterns: Pooled, Bridge, Silo

The three multi-tenant saas architecture patterns are pooled, bridge, and silo. Each represents a point on the isolation-vs-operational-complexity curve.

Pooled (shared database, shared schema). All tenants share one database. Every business-data table has a tenant_id column. Isolation is enforced through row-level security or middleware. Lowest operational complexity, lowest per-tenant cost, easiest to back up and migrate. The default for 2026 B2B saas at MVP and seed stage. Trade-off: weakest isolation, highest noisy-neighbor risk, hardest to satisfy strict data-residency requirements.

Bridge (shared database, separate schema per tenant). All tenants share one database, but each gets its own Postgres schema. Stronger logical isolation than pooled. Schema migrations apply per tenant, which adds operational complexity. Right when mid-market customers want stronger boundaries but the saas is not ready for full silo. Trade-off: schema migration ergonomics get harder as tenant count grows past roughly 50.

Silo (separate database or instance per tenant). Each tenant has a dedicated database or even dedicated infrastructure. Strongest isolation, supports any data-residency or regulatory requirement, eliminates noisy-neighbor risk completely. Trade-off: highest operational complexity (every tenant is its own deployment), highest per-tenant cost, and the marginal-cost-approaches-zero benefit of saas economics goes away.

The architectural reference for all three patterns is the AWS Well-Architected SaaS Lens, which covers tenant isolation, billing, identity, and compliance patterns at depth.

The right pattern is rarely “one for all tenants.” The most common production multi-tenant saas architecture in 2026 is a hybrid: pooled for the standard tier, bridge or silo for enterprise tier customers who pay for it. The decision tree below routes the saas to the right starting pattern.

Pooled Multi-Tenant Saas Architecture: Row-Level Security in Postgres

The pooled multi-tenant saas architecture pattern relies on Postgres row-level security (RLS) to enforce tenant isolation at the database layer. RLS is the difference between “we hope the application code filters by tenant_id” and “the database refuses to return rows from the wrong tenant.”

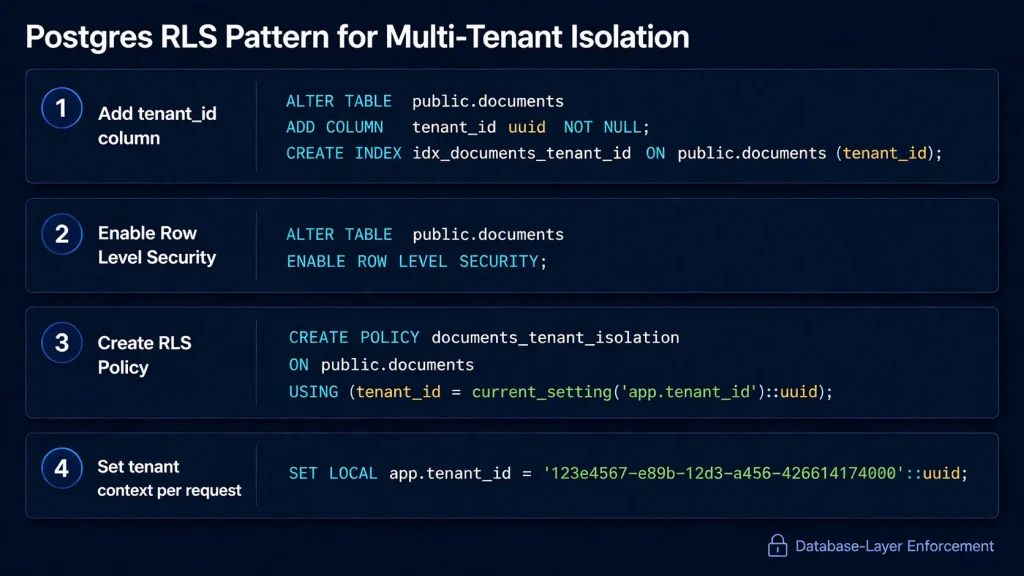

The minimum pooled implementation in Postgres:

```sql
-- 1. Add tenant_id to every business-data table
--    (on a table that already has rows: add it nullable, backfill, then SET NOT NULL)
ALTER TABLE projects ADD COLUMN tenant_id UUID NOT NULL;
CREATE INDEX idx_projects_tenant ON projects(tenant_id);

-- 2. Enable row-level security on the table
--    FORCE makes the policy apply to the table owner as well
ALTER TABLE projects ENABLE ROW LEVEL SECURITY;
ALTER TABLE projects FORCE ROW LEVEL SECURITY;

-- 3. Create the RLS policy
CREATE POLICY tenant_isolation ON projects
    USING (tenant_id = current_setting('app.current_tenant')::uuid);

-- 4. In application code, set the tenant context per request
--    SET LOCAL only takes effect inside a transaction
BEGIN;
SET LOCAL app.current_tenant = 'tenant-uuid-here';
-- ... the request's queries run here ...
COMMIT;
```

The application middleware (Express, Hono, Laravel) opens a transaction and sets app.current_tenant at the start of every request, based on the authenticated user’s tenant. From that point on, every query against projects in that transaction is filtered to the correct tenant. As long as the application connects with a role that cannot bypass RLS, a bug in application code cannot leak data from one tenant to another, because the database itself enforces the isolation.
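The per-request step can be sketched in application code. The following is a minimal Python sketch, not a definitive implementation: `RecordingCursor` is a hypothetical stand-in for a real database cursor so the example runs without a database, the `%s` placeholder style assumes a psycopg-like driver, and `tenant_transaction` is an illustrative name. It uses Postgres's `set_config(name, value, is_local)` function, which behaves like SET LOCAL when the third argument is true and, unlike SET, accepts a bound parameter.

```python
import uuid
from contextlib import contextmanager


class RecordingCursor:
    """Hypothetical stand-in for a DB-API cursor; it only records statements."""

    def __init__(self):
        self.executed = []

    def execute(self, sql, params=()):
        self.executed.append((sql, tuple(params)))


@contextmanager
def tenant_transaction(cursor, tenant_id):
    """Run the enclosed queries in one transaction scoped to a single tenant."""
    # Validate first: a malformed tenant id should fail the request before any SQL runs.
    tenant = str(uuid.UUID(str(tenant_id)))
    cursor.execute("BEGIN")
    try:
        # is_local=true: the setting vanishes at COMMIT or ROLLBACK,
        # so a pooled connection never carries one tenant's context to the next request.
        cursor.execute("SELECT set_config('app.current_tenant', %s, true)", (tenant,))
        yield cursor
        cursor.execute("COMMIT")
    except Exception:
        cursor.execute("ROLLBACK")
        raise


cur = RecordingCursor()
with tenant_transaction(cur, "3f0c2a1e-6b7d-4c8e-9f10-2a3b4c5d6e7f") as c:
    c.execute("SELECT id, name FROM projects")  # RLS would filter this to one tenant
```

Scoping the setting to the transaction matters most behind a connection pooler, where the same physical connection serves many tenants in sequence.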

Three patterns extend this baseline.

Bypass for admins. A separate database role (e.g., admin_role, created with the BYPASSRLS attribute) bypasses RLS for support and analytics queries; the application’s request-handling role does not.

Multi-tenant queries. Aggregation queries that span tenants (e.g., “total active users across all tenants for billing”) use the admin role explicitly.

Migration safety. Every new business-data table needs RLS enabled before it goes to production; a missing RLS policy is the most common multi-tenant data leak in the wild.
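The migration-safety rule is easy to automate. Below is a hedged sketch of a check a CI job could run after applying migrations to a scratch database: the SQL reads the `pg_class.relrowsecurity` flag and the `pg_policies` view from the Postgres catalog, while the Python function, the `EXEMPT_TABLES` allowlist, and the sample rows are hypothetical and only illustrate the shape of the check.

```python
# Catalog query to run against a freshly migrated database.
# relrowsecurity is true once ENABLE ROW LEVEL SECURITY has been applied;
# pg_policies lists the policies attached to each table.
RLS_AUDIT_SQL = """
SELECT c.relname,
       c.relrowsecurity,
       EXISTS (
           SELECT 1 FROM pg_policies p
           WHERE p.schemaname = n.nspname AND p.tablename = c.relname
       ) AS has_policy
FROM pg_class c
JOIN pg_namespace n ON n.oid = c.relnamespace
WHERE n.nspname = 'public' AND c.relkind = 'r'
"""

# Hypothetical allowlist: tables that are global by design.
EXEMPT_TABLES = {"tenants", "schema_migrations"}


def tables_missing_rls(rows, exempt=EXEMPT_TABLES):
    """Return sorted names of tables lacking RLS or lacking any policy."""
    return sorted(
        name
        for name, rls_enabled, has_policy in rows
        if name not in exempt and not (rls_enabled and has_policy)
    )


# Sample rows in the shape the query returns: (table, rls_enabled, has_policy).
sample_rows = [
    ("projects", True, True),
    ("invoices", True, False),        # RLS on but no policy: misconfigured
    ("feature_flags", False, False),  # new table shipped without RLS
    ("tenants", False, False),        # exempt by design
]
offenders = tables_missing_rls(sample_rows)
if offenders:
    print("Tables without tenant isolation:", ", ".join(offenders))
```

A CI step would run `RLS_AUDIT_SQL`, pass the rows to `tables_missing_rls`, and fail the build when the list is non-empty.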

The performance cost of RLS is negligible at MVP and seed scale. At 1M+ rows per tenant across dozens of tables, query planning starts to matter: policy expressions need to stay simple enough to use the tenant_id index, and a few queries may need explicit tenant_id filters in addition to RLS to get that index usage. This is solvable, not architectural.

The canonical reference for Postgres RLS is the Postgres Row Security Policies documentation, which covers the policy syntax, role hierarchy, and bypass mechanics in depth. For the broader saas tech stack context that places RLS in the data layer, see the 2026 saas tech stack.

The pooled multi-tenant saas architecture with RLS is the right default for roughly 80 percent of B2B saas in 2026. Founders who deviate without a specific compliance or scale reason almost always over-engineer.

Silo Multi-Tenant Saas Architecture: Schema-per-Tenant Trade-Offs

The silo multi-tenant saas architecture pattern (one schema per tenant or one database per tenant) provides the strongest isolation but bites back operationally as tenant count grows.

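The pattern looks elegant on paper. Every tenant has its own Postgres schema or, in the stricter variant, its own database, complete with its own tables. A forgotten WHERE clause cannot leak data across tenants because no table holds more than one tenant’s rows, although only the database-per-tenant variant separates the data physically. Migrations look simple: just create the same tables in every schema.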

The bite-back happens around tenant 50 to 100. Schema migrations have to apply per tenant, which means the migration tooling needs to iterate through every tenant and apply changes individually. A migration that takes 30 seconds against one schema takes 30 seconds × N schemas, with no parallelization unless built explicitly. Failures during migration leave some tenants on the new schema and some on the old, which is operationally painful to recover.
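The arithmetic and the partial-failure problem can be made concrete. This is a minimal sketch under stated assumptions: `apply_migration` is a hypothetical callable that runs one migration against one schema, and the schema names are invented. It records which tenants succeeded so a rerun can resume instead of starting over; a real runner would persist that state in a table.

```python
def migrate_all(schemas, apply_migration, already_done=frozenset()):
    """Apply one migration to every tenant schema, tracking per-schema outcomes."""
    done = set(already_done)
    failed = {}
    for schema in schemas:
        if schema in done:
            continue  # resume support: skip tenants migrated in an earlier run
        try:
            apply_migration(schema)
        except Exception as exc:
            failed[schema] = str(exc)  # keep going; one bad tenant should not block the rest
        else:
            done.add(schema)
    return done, failed


def serial_minutes(seconds_per_schema, schema_count):
    """Wall-clock minutes for a serial run: seconds x N schemas."""
    return seconds_per_schema * schema_count / 60


def fake_apply(schema):
    # Simulated failure for one tenant, standing in for a lock timeout.
    if schema == "tenant_globex":
        raise RuntimeError("lock timeout")


schemas = ["tenant_acme", "tenant_globex", "tenant_initech"]
done, failed = migrate_all(schemas, fake_apply)
print(sorted(done), failed)
print(serial_minutes(30, 100), "minutes for 100 schemas at 30 seconds each")
```

At 100 tenants the 30-second migration becomes a 50-minute serial run, which is why the pain shows up in the 50-to-100 tenant range.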

Backups also multiply. A pooled architecture has one database backup; a silo architecture has N. Restore-to-point-in-time procedures get complicated. The “restore tenant X to yesterday” operation that pooled architectures handle through SELECT queries becomes a database-level operation in silo.

Silo is the right answer in three scenarios. First, when contract terms with enterprise customers explicitly require physically separate data, regardless of cost. Second, when individual tenants have data volumes large enough that a shared database becomes the bottleneck (rare, but real). Third, when regulatory requirements (specific HIPAA implementations, financial services, government contracts) mandate isolation at the storage layer. Outside these three scenarios, silo is over-engineering that costs months of operational pain. The Microsoft multi-tenant SaaS architecture patterns guide has good documentation on when each pattern fits, including signal patterns for when silo becomes necessary.

The Stack Fitness Score for silo: 5 of 5 on isolation strength, 2 of 5 on operational complexity, 2 of 5 on per-tenant cost economics. Choose deliberately.

Bridge Multi-Tenant Saas Architecture: The Best of Both Worlds

The bridge multi-tenant saas architecture pattern occupies the middle ground: a shared database with separate Postgres schemas per tenant, sharing infrastructure but providing stronger logical isolation than pooled.

The pattern works well at small-to-mid scale. Each tenant gets a dedicated schema with its own tables. Schema migrations are still per-tenant but operationally simpler than full silo because the database connection pool is shared. Cross-tenant queries (when needed for billing, analytics, or admin operations) work through the search_path or explicit schema references.

Bridge becomes the right choice in three scenarios. First, when contract terms require logical isolation but not physical separation. Second, when a few tenants are significantly larger than others and would benefit from dedicated indexes and statistics. Third, as the migration target when a saas outgrows pooled but is not ready for full silo.

The hidden cost of bridge: tooling and library support is weaker than pooled. Most ORMs (Prisma, Eloquent, ActiveRecord) assume single-schema operation by default. Bridge requires either tenant-aware connection management, dynamic schema setting per request, or migration to a lower-level query builder. The setup is solvable, not trivial.
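Dynamic schema setting per request usually comes down to one statement at the start of the transaction. The sketch below assumes a hypothetical naming convention (`tenant_<slug>`) and a hypothetical slug format. Because SET does not accept bound parameters, the schema name has to be interpolated, so the slug is validated strictly before it gets anywhere near SQL.

```python
import re

# Assumed slug format: lowercase letter first, then letters, digits, underscores.
# 51 characters plus the "tenant_" prefix stays under Postgres's 63-byte identifier limit.
_SLUG = re.compile(r"[a-z][a-z0-9_]{0,50}")


def tenant_schema(slug):
    """Map a tenant slug to its schema name, rejecting anything unexpected."""
    if not _SLUG.fullmatch(slug):
        raise ValueError(f"invalid tenant slug: {slug!r}")
    return f"tenant_{slug}"


def search_path_statement(slug):
    """Statement to run inside the request's transaction, before any query."""
    schema = tenant_schema(slug)
    # SET LOCAL reverts at COMMIT or ROLLBACK, so pooled connections stay clean.
    return f'SET LOCAL search_path TO "{schema}", public'


print(search_path_statement("acme"))
```

With the search path set, unqualified table names in ORM-generated queries resolve to the tenant's schema first and fall back to shared tables in public.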

Migration complexity also stacks. Bridge architectures still need per-tenant migrations, just like silo. The savings come from shared infrastructure (one Postgres instance, one connection pool, one backup target), not from simpler migration mechanics.

The Stack Fitness Score for bridge: 4 of 5 on isolation strength, 3 of 5 on operational complexity, 4 of 5 on per-tenant economics. Bridge is rarely the starting pattern; it is usually the migration destination from pooled when a few tenants outgrow shared schema constraints.

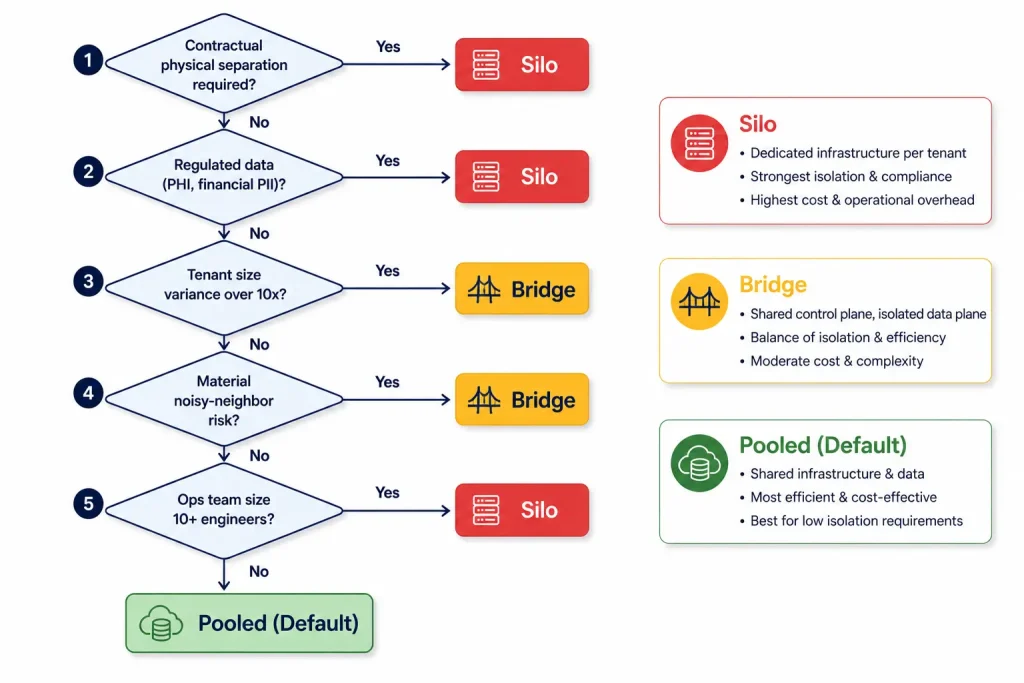

The Multi-Tenant Saas Architecture Decision Tree

The Tenancy Decision Tree is the framework for picking the right multi-tenant saas architecture pattern. Five yes/no questions route the saas to pooled, bridge, or silo.

Question 1: Do any tenants require contractually separate physical data storage? If yes (regulated industries, government contracts, certain enterprise terms), silo is required at minimum for those tenants. If no, continue to question 2.

Question 2: Does the saas process regulated data (PHI, financial PII, EU personal data with strict residency)? If PHI is involved, silo is strongly recommended for the regulated tenants because HIPAA’s auditability requirements are easier to meet in dedicated databases. If the concern is GDPR with strict EU residency, an EU-region deployment is the requirement; pooled per region usually suffices, with bridge or silo reserved for customers who contractually demand more. If unregulated, continue.

Question 3: Do any tenants have data volumes 10x or more above the median tenant? If yes, those tenants will benefit from dedicated indexes and statistics. Bridge or silo for the outliers. If no, continue.

Question 4: Is the noisy-neighbor risk material? Some tenants generate query loads or write volumes that affect other tenants on shared infrastructure. If a single tenant could realistically dominate the database, bridge or silo for that tenant. If load is well-distributed, continue.

Question 5: Is the operations team large enough to maintain per-tenant migrations and backups? Schema-per-tenant and database-per-tenant patterns require disciplined ops practices. If the team is two engineers, pooled is the right default. If the team is ten engineers including dedicated infrastructure capacity, bridge or silo are operationally feasible.

The default routing for most B2B saas in 2026: question 1 is no, question 2 is GDPR-only (handled by region selection, not pattern), questions 3 and 4 are no at MVP scale, question 5 is “two engineers” which routes to pooled. Most saas should start pooled and migrate selectively to bridge for outlier tenants if and when those tenants emerge.
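The five questions can be written down as a small function, which makes the routing easy to review in a planning meeting. This is one possible encoding, not the canonical one: the field names are invented, GDPR residency is treated as a region decision (as in the default routing above), and combining questions 3 and 4 with the ops-capacity answer from question 5 is an interpretation.

```python
from dataclasses import dataclass


@dataclass
class TenancyAnswers:
    requires_physical_separation: bool  # Q1
    handles_phi: bool                   # Q2, PHI case
    needs_eu_residency: bool            # Q2, GDPR case
    has_outlier_tenants: bool           # Q3: 10x or more above the median tenant
    noisy_neighbor_risk: bool           # Q4
    can_run_per_tenant_ops: bool        # Q5


def recommend(a):
    """Return (starting_pattern, notes) for a set of answers."""
    notes = []
    if a.needs_eu_residency:
        notes.append("deploy an EU region and route EU tenants to it")
    if a.requires_physical_separation:
        return "silo", notes + ["required at minimum for the tenants whose contracts demand it"]
    if a.handles_phi:
        return "silo", notes + ["strongly recommended for the regulated tenants"]
    if a.has_outlier_tenants or a.noisy_neighbor_risk:
        if a.can_run_per_tenant_ops:
            return "bridge", notes + ["bridge or silo for the outliers, pooled for everyone else"]
        return "pooled", notes + ["outliers exist but ops capacity is thin: stay pooled and throttle"]
    return "pooled", notes


# The default routing described above: no to Q1, GDPR-only on Q2, no to Q3 and Q4, small team.
default = TenancyAnswers(
    requires_physical_separation=False,
    handles_phi=False,
    needs_eu_residency=True,
    has_outlier_tenants=False,
    noisy_neighbor_risk=False,
    can_run_per_tenant_ops=False,
)
print(recommend(default))
```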

The decision tree is included as a downloadable template at the end of this article, alongside the Postgres RLS code samples and the migration playbooks for each pattern transition.

Data Residency in Multi-Tenant Saas Architecture: GDPR Compliance

Data residency is the requirement that customer data stays in specific geographic regions. GDPR makes this a real concern for any saas with EU customers. The multi-tenant saas architecture decision interacts with residency in three ways.

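Pooled with single-region deployment. All tenant data lives in one region. This is the simplest setup, but if the region is outside the EU, every EU customer’s data becomes a cross-border transfer that needs a legal basis under GDPR (for example Standard Contractual Clauses), and many EU buyers will insist on EU hosting regardless. Acceptable for US-only saas or until the first EU customer arrives.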

Pooled with multi-region deployment. The application runs in multiple regions, with tenants routed to the region matching their data-residency requirement. EU tenants land on EU-region database; US tenants on US-region database. This is the dominant 2026 pattern for B2B saas selling cross-border. Implementation requires region-aware tenant routing (typically via a tenant_region field on the tenants table) and replicated identity infrastructure.
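Region-aware routing can be as small as a lookup keyed on the tenant's region field. In this sketch the connection strings, hostnames, and region codes are placeholders; only the tenant_region field comes from the text. The one design point worth copying is that an unknown region fails closed rather than falling back to a default database.

```python
# Placeholder connection strings; real values would come from configuration.
REGION_DSNS = {
    "eu": "postgresql://app@db.eu.example.internal/app",
    "us": "postgresql://app@db.us.example.internal/app",
}


def dsn_for_tenant(tenant):
    """Pick the database for a tenant record based on its tenant_region field."""
    region = tenant["tenant_region"]
    try:
        return REGION_DSNS[region]
    except KeyError:
        # Fail closed: never write a tenant's data to a region it did not ask for.
        raise LookupError(f"no database deployed for region {region!r}") from None


acme = {"id": "t-1", "name": "Acme GmbH", "tenant_region": "eu"}
print(dsn_for_tenant(acme))
```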

Silo with per-tenant region. Each tenant’s database lives in its specifically requested region. Strongest residency posture but operationally heaviest. Required for some financial services and healthcare customers; rarely needed for general B2B saas.

The hidden architectural cost of residency: cross-region operations (analytics queries, billing rollups, admin tools) become harder. A single SQL query cannot span EU and US databases without data-transfer compliance review. Most saas in 2026 build a separate analytics layer (often a Snowflake or BigQuery warehouse with region-aware ingestion) to handle cross-region aggregation while keeping production data residency-compliant.

The pragmatic guidance for early-stage saas: design the multi-tenant saas architecture to support multi-region deployment from day one (region field on tenants table, region-aware identity middleware, region-aware deployment pipeline) but defer actually deploying multiple regions until the first paying EU customer requires it. This keeps options open without paying the operational cost prematurely.

HIPAA in Multi-Tenant Saas Architecture: When Silo Is Required

HIPAA compliance interacts with multi-tenant saas architecture in ways that surprise founders entering healthcare. The framework permits multi-tenancy but requires specific architectural controls that most pooled patterns satisfy partially and most silo patterns satisfy fully.

The HIPAA technical requirements that matter for multi-tenancy: encryption at rest and in transit (any pattern can satisfy this), access controls per role and per tenant (RLS handles this), audit logs of every PHI access (any pattern can satisfy this), and data segregation that prevents cross-tenant access in the event of a security incident (this is where silo wins).

Pooled multi-tenancy with strong RLS is HIPAA-compatible for many implementations. The covered entity (the customer) signs a Business Associate Agreement (BAA) with the saas, which obligates the saas to specific controls. The BAA’s data segregation requirements can be satisfied through RLS, encryption, and audit logging without physical isolation. AWS RDS, Aurora, and most managed Postgres providers support this configuration.

Silo is required when contract terms with healthcare customers explicitly demand physically separate databases. This is more common in hospital systems, government healthcare programs, and some health insurance customers. The driver is rarely HIPAA itself; it is the customer’s procurement team writing stricter requirements than HIPAA actually mandates.

The practical pattern for healthcare-adjacent saas: start pooled with strict RLS and audit logging, signed BAAs with customers, encryption keys managed in AWS KMS or equivalent. Migrate specific tenants to silo only when contract terms demand it and the deal economics justify the operational overhead. Most healthcare saas in 2026 ship pooled architectures and migrate to silo for two or three named enterprise customers, not the whole tenant base.

Noisy Neighbors in Multi-Tenant Saas Architecture: Throttling and Limits

A noisy neighbor in a multi-tenant saas architecture is a tenant whose load patterns affect other tenants on shared infrastructure. The noisy neighbor problem is the single largest hidden cost of pooled architecture.

The four practical noisy neighbor controls:

Connection pool limits per tenant. No single tenant should be able to monopolize the database connection pool. PgBouncer caps connections per database or per user (max_db_connections, max_user_connections), which maps to tenants only when each tenant has its own database or role; in a pooled setup with one shared role, the cap usually lives in application middleware instead. Default cap: 10 connections per tenant on a pool of 100.

Rate limiting per tenant. API requests per tenant per minute are throttled at the application layer, with limits varying by subscription tier. Free tier might allow 60 requests per minute; enterprise tier might allow 6,000. Redis is the standard implementation substrate.

Query timeout per tenant. Postgres statement_timeout set per role or per session ensures no single tenant query can run for more than N seconds. Default: 30 seconds for transactional queries, 5 minutes for explicitly opted-in analytics queries.

Resource isolation via PgBouncer pools or read replicas. Larger tenants can be routed to dedicated connection pools or even dedicated read replicas, providing logical separation without full schema-per-tenant complexity.

Without these controls, a single tenant’s expensive query, runaway loop, or sudden traffic spike degrades performance for every other tenant on the platform. The cost of installing the controls is roughly 2 to 4 days of engineering work at MVP stage. The cost of installing them after the first customer-impacting incident is the same, plus the customer-trust damage.
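Of the four controls, per-tenant rate limiting is the quickest to sketch. The example below is a fixed-window counter held in memory, standing in for the Redis counter-with-expiry a production setup would more likely use; the class name and the injectable clock are illustrative, and the tier limits are the ones quoted above.

```python
import time

# Requests per minute by subscription tier, using the figures from the text.
TIER_LIMITS = {"free": 60, "enterprise": 6000}


class TenantRateLimiter:
    """Fixed-window limiter: each tenant gets its own counter per 60-second window."""

    def __init__(self, clock=time.time):
        self._clock = clock  # injectable so the behaviour can be tested without sleeping
        self._state = {}     # tenant_id -> (window_number, requests_seen)

    def allow(self, tenant_id, tier):
        window = int(self._clock() // 60)
        seen_window, count = self._state.get(tenant_id, (window, 0))
        if seen_window != window:
            count = 0  # a new minute started: reset this tenant's counter
        if count >= TIER_LIMITS[tier]:
            return False  # the caller would typically respond with HTTP 429
        self._state[tenant_id] = (window, count + 1)
        return True


limiter = TenantRateLimiter()
print(limiter.allow("acme", "free"))
```

Because the counter is keyed by tenant, one tenant exhausting its budget has no effect on any other tenant's requests.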

Tenant Identification in Multi-Tenant Saas Architecture

Tenant identification is the question of how the application knows which tenant a request belongs to. Four patterns dominate multi-tenant saas architecture in 2026.

Subdomain-based. Each tenant gets a subdomain like acme.mysaas.com. The reverse proxy or application middleware extracts the subdomain and resolves it to a tenant_id. Strongest user-facing pattern, supports vanity URLs, requires DNS wildcard configuration. Best for B2B saas where tenant identity is a brand surface.

Path-based. Each tenant lives at mysaas.com/acme/. Simpler infrastructure than subdomain but feels less professional and complicates SEO. Right for internal tools or saas where tenant identity is not a customer-facing concern.

Header-based. API requests carry an X-Tenant-ID header. Used internally for service-to-service communication or in mobile API contexts where subdomain routing is awkward.

JWT claim-based. The authenticated user’s JWT contains a tenant claim. Middleware extracts the claim and sets the tenant context. Strongest pattern when the user has a single tenant, weaker when users belong to multiple tenants and need to switch.

Most B2B saas in 2026 combine two patterns: subdomain for branded tenant access and JWT claim for the actual tenant context inside the application. The subdomain establishes which tenant is being accessed; the JWT proves the user has access to that tenant.

The hidden gotcha: tenant identification needs to be enforced at the edge, before any business logic runs. A request that gets to a controller without resolved tenant context is a security incident waiting to happen. Middleware ordering in Express, Hono, and Laravel matters; tenant resolution should happen immediately after authentication and before any request handler executes.
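The subdomain-plus-JWT combination reduces to one check that runs before any handler: the tenant named by the host has to match the tenant the token grants. This sketch assumes the JWT signature has already been verified upstream and that the tenant lives in a claim called `tenant_id`; the claim name, the `TenantMismatch` exception, and the lookup table are all hypothetical.

```python
BASE_DOMAIN = "mysaas.com"  # the example domain used above


class TenantMismatch(Exception):
    """Raised when the request cannot be tied to exactly one permitted tenant."""


def subdomain_slug(host):
    """Extract 'acme' from 'acme.mysaas.com'; return None for anything else."""
    host = host.split(":", 1)[0].lower().rstrip(".")
    suffix = "." + BASE_DOMAIN
    if not host.endswith(suffix):
        return None
    slug = host[: -len(suffix)]
    return slug if slug and "." not in slug else None


def resolve_tenant(host, claims, slug_to_tenant_id):
    """Return the tenant id only when the subdomain and the verified claims agree."""
    slug = subdomain_slug(host)
    if slug is None or slug not in slug_to_tenant_id:
        raise TenantMismatch("unknown tenant host")
    tenant_id = slug_to_tenant_id[slug]
    if claims.get("tenant_id") != tenant_id:
        raise TenantMismatch("token does not grant access to this tenant")
    return tenant_id


TENANTS = {"acme": "t-1", "globex": "t-2"}
print(resolve_tenant("acme.mysaas.com", {"sub": "user-9", "tenant_id": "t-1"}, TENANTS))
```

Raising instead of returning None keeps a request with unresolved tenant context from ever reaching a controller.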

Migration Paths Between Multi-Tenant Saas Architecture Patterns

Migrating between multi-tenant saas architecture patterns is meaningful work, but is rarely the catastrophe founders fear. The three common migration paths and their realistic costs:

Pooled to bridge. Transferring select tenants from shared schema to dedicated schemas. Process: create a per-tenant schema for the migrating tenants, copy data, switch routing for those tenants, validate, decommission old data. Estimated time: 1 to 2 weeks per migrated tenant for the engineering work, though scriptable so the per-tenant marginal cost decreases. Most common driver: a single large customer asks for stronger isolation as a contract term.

Pooled to silo (selective). Moving specific tenants to dedicated databases. Process: provision new database per migrating tenant, dump and restore data, switch routing, validate, decommission. Estimated time: 2 to 3 weeks per tenant for the first migration, 3 to 5 days per tenant after the tooling is built.

Silo to pooled. Consolidating dedicated databases back into shared infrastructure. Process: design the shared schema, migrate data with tenant_id tagging, switch routing, validate. Most painful migration because backups and restore procedures change fundamentally. Estimated time: 4 to 12 weeks total. Rarely done; the usual driver is hitting an operational wall with too many silos.

The migration cost guidance: pick a reasonable starting pattern (pooled for most B2B saas), build the tenant-aware infrastructure to make later migration possible (region field, migration scripts, dump-restore tooling), and accept that 1 in 20 tenants might eventually migrate to bridge or silo. The incremental cost of supporting that flexibility from day one is roughly 5 percent of build time. The cost of being unable to migrate when an enterprise customer demands it is the lost contract.
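As an illustration of why the pooled-to-bridge path is scriptable, the copy step can be generated per tenant. This is a rough sketch with hypothetical helper names; it assumes the shared tables live in the public schema and that the driver uses `%s` placeholders. `CREATE TABLE ... (LIKE ... INCLUDING ALL)` copies columns, defaults, and indexes but not foreign keys, so those, along with table ordering, routing, validation, and decommissioning, are left out.

```python
import re

_IDENT = re.compile(r"[a-z_][a-z0-9_]*")


def _ident(name):
    """Quote an identifier after checking it against a conservative pattern."""
    if not _IDENT.fullmatch(name) or len(name) > 63:
        raise ValueError(f"unsafe identifier: {name!r}")
    return f'"{name}"'


def copy_tenant_statements(schema, tables):
    """Return (sql, takes_tenant_id) pairs that copy one tenant into its own schema."""
    target = _ident(schema)
    statements = [(f"CREATE SCHEMA IF NOT EXISTS {target}", False)]
    for table in tables:
        t = _ident(table)
        statements.append(
            (f"CREATE TABLE {target}.{t} (LIKE public.{t} INCLUDING ALL)", False)
        )
        # The tenant id is passed as a bound parameter when the statement runs.
        statements.append(
            (f"INSERT INTO {target}.{t} SELECT * FROM public.{t} WHERE tenant_id = %s", True)
        )
    return statements


for sql, takes_tenant_id in copy_tenant_statements("tenant_acme", ["projects"]):
    print(sql)
```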

5 Multi-Tenant Saas Architecture Breach Patterns

Multi-tenant data breaches follow predictable patterns. The five most common, with anonymized examples drawn from public post-mortems and industry reports:

Pattern 1: Missing RLS policy on a new table. A B2B HR saas in 2023 leaked tenant data through a feature table created without RLS enabled. Application code filtered correctly in most code paths but missed one admin endpoint. Fix: automated CI check that fails the build if any new table lacks an RLS policy.

Pattern 2: Authorization check at the wrong layer. A B2B project management saas leaked tenant data when application code performed authorization checks before tenant resolution. Users authenticated as tenant A could request resources from tenant B by tampering with URL parameters. Fix: enforce tenant resolution at the middleware layer, not the controller.

Pattern 3: Tenant_id not propagating through async work. A B2B financial saas leaked data through a background job system that lost tenant context when jobs were dequeued. Reports generated in the background pulled rows from random tenants. Fix: serialize tenant_id with every job payload and re-establish RLS context on dequeue.

Pattern 4: Cache key collisions. A B2B analytics saas leaked tenant dashboards through Redis cache keys that did not include tenant_id. A user from tenant A would see tenant B’s dashboard from cache. Fix: prefix every cache key with tenant_id, audit existing keys.

Pattern 5: Search index without tenant filtering. A B2B knowledge-base saas leaked documents through Elasticsearch queries that lacked tenant filtering at the index level. Users could search across all tenants by crafting specific queries. Fix: per-tenant Elasticsearch indices or strict tenant filtering on every query.

The thread across all five patterns: the breach happens at a layer the team did not consider, not at the layer they secured carefully. Defense-in-depth (RLS, application checks, middleware, audit logging) catches errors that any single layer misses.
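The fixes for patterns 3 and 4 share one idea: tenant identity travels with the data instead of living in ambient state. A hedged sketch of both follows; the function names, key layout, and job payload shape are invented, and `set_tenant_context` stands for whatever re-establishes the RLS context (for example, opening a tenant-scoped transaction).

```python
import json


def tenant_cache_key(tenant_id, *parts):
    """Build a cache key that cannot collide across tenants."""
    if not tenant_id:
        raise ValueError("cache key requested without a tenant")
    return ":".join(["t", str(tenant_id), *map(str, parts)])


def enqueue_payload(tenant_id, job_name, args):
    """Serialize a background job with its tenant attached."""
    if not tenant_id:
        raise ValueError("job enqueued without a tenant")
    return json.dumps({"tenant_id": str(tenant_id), "job": job_name, "args": args})


def run_job(raw, handlers, set_tenant_context):
    """Worker side: restore tenant context before any handler code runs."""
    job = json.loads(raw)
    tenant_id = job["tenant_id"]   # KeyError if a producer forgot it: fail, do not guess
    set_tenant_context(tenant_id)
    return handlers[job["job"]](tenant_id, **job["args"])


print(tenant_cache_key("t-1", "dashboard", 7))
print(enqueue_payload("t-1", "build_report", {"month": "2026-01"}))
```

Both helpers refuse to work without a tenant, which turns a silent cross-tenant leak into a loud failure during development.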

Observability for Multi-Tenant Saas Architecture

Observability for multi-tenant saas architecture means every metric, log, and trace is tagged with tenant_id, so the team can answer “which tenant is causing this?” within seconds.

The day-one observability requirements:

Per-tenant metrics. Request rates, error rates, query times, cache hit rates, all broken down by tenant. Datadog, Honeycomb, and Sentry support tenant-tagged metrics natively if the tag is included in instrumentation calls.

Per-tenant cost allocation. AI inference costs, storage costs, bandwidth costs allocated to specific tenants, so the team can identify negative-margin customers before they become a financial problem. Implementation: a usage_events table that logs every billable operation with tenant_id, aggregated nightly into per-tenant cost summaries.

Per-tenant audit logs. Every data-modifying action attributed to a user and a tenant, retained per regulatory requirements. Critical for HIPAA and SOC 2 compliance, useful for debugging customer-reported issues.

Tenant-aware error tracking. Sentry, Honeybadger, and Bugsnag can group errors by tenant, surfacing patterns where one tenant generates disproportionate error volume. This is how teams identify integration issues with specific customers before those customers churn.

The cost of skipping per-tenant observability and adding it later is roughly 2 weeks of refactor work plus the customer-trust damage from incidents that should have been caught with proper monitoring. For the broader pre-launch operational checklist that places observability in context, see the saas pre-launch checklist.
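The nightly cost rollup mentioned under per-tenant cost allocation can be sketched in a few lines. The event fields (`kind`, `cost_usd`) and the revenue lookup are assumptions about what a usage_events table might hold; amounts are kept as Decimal to avoid float drift in money totals.

```python
from collections import defaultdict
from decimal import Decimal


def summarize_costs(usage_events):
    """Aggregate billable events into {tenant_id: {kind: total_cost}}."""
    totals = defaultdict(lambda: defaultdict(Decimal))
    for event in usage_events:
        totals[event["tenant_id"]][event["kind"]] += Decimal(event["cost_usd"])
    return {tenant: dict(kinds) for tenant, kinds in totals.items()}


def negative_margin_tenants(totals, monthly_revenue):
    """Tenants whose summed costs exceed what they pay."""
    return sorted(
        tenant
        for tenant, kinds in totals.items()
        if sum(kinds.values()) > monthly_revenue.get(tenant, Decimal(0))
    )


events = [
    {"tenant_id": "acme", "kind": "ai_inference", "cost_usd": "0.40"},
    {"tenant_id": "acme", "kind": "storage", "cost_usd": "0.10"},
    {"tenant_id": "acme", "kind": "ai_inference", "cost_usd": "0.60"},
    {"tenant_id": "globex", "kind": "ai_inference", "cost_usd": "120.00"},
]
totals = summarize_costs(events)
revenue = {"acme": Decimal("99"), "globex": Decimal("99")}
print(negative_margin_tenants(totals, revenue))
```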

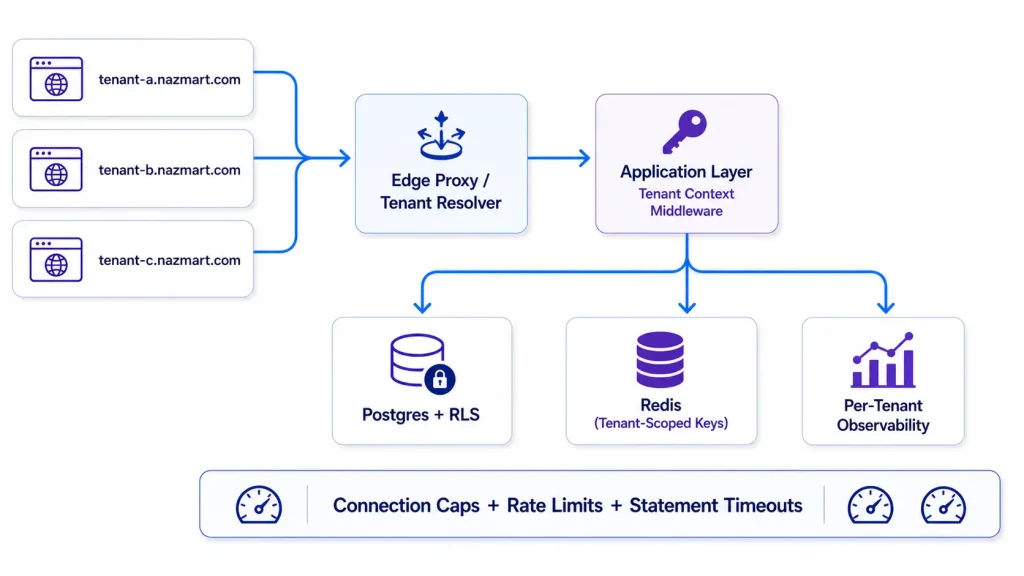

Multi-Tenant Saas Architecture at Scale: Nazmart Case Study

Nazmart, a productized eCommerce saas in the Xgenious portfolio, runs production multi-tenant infrastructure that demonstrates the pooled-with-RLS pattern at scale. The architecture is instructive because it shows what the default 2026 pattern looks like once it is hardened.

Tenancy pattern: pooled with Postgres RLS. Every business-data table carries a tenant_id column with row-level security policies enforcing isolation. Tenants are identified by subdomain ({tenant-name}.nazmart.com) and resolved at the edge proxy before requests reach application code.

Scale numbers. Hundreds of active tenants on shared Postgres infrastructure, with the largest tenant generating roughly 30x the volume of the median tenant. The pooled pattern holds because none of the noisy neighbor thresholds have been crossed: connection pools and rate limits prevent any tenant from monopolizing infrastructure, and the volume distribution skews but does not break the architecture.

Compliance posture. GDPR-compliant with EU-region routing for European tenants, audit logs immutable and queryable per tenant, deletion-on-request workflow that cascades through all tenant tables. SOC 2 readiness in place but not yet pursued because the customer base does not require Type II at this stage.

Operational learnings. Three patterns Nazmart’s architecture teaches that are transferable to any multi-tenant saas architecture. First, RLS plus middleware-level tenant context plus tenant-tagged observability is sufficient for the first 500 to 1,000 tenants without architectural change. Second, the noisy neighbor controls (connection caps, query timeouts, rate limits) deserve to be in place before the first paying customer, because retrofitting them after an incident costs more than installing them upfront. Third, the migration path from pooled to bridge for outlier tenants is solvable at the rate of 1 to 2 tenants per quarter, which has proven sufficient even as enterprise customers with stronger isolation requirements have entered the customer base.

For the productized eCommerce saas itself, see Nazmart. For the broader build framework that produced the architecture, see how to build a saas in 2026.

Conclusion: Multi-Tenant Saas Architecture as a Long-Term Decision

Multi-tenant saas architecture is one of the highest-leverage decisions in the early life of a saas. Three patterns (pooled, bridge, silo) cover the design space. Five questions in the Tenancy Decision Tree route the saas to the right starting pattern. Postgres row-level security enforces the pooled model at the database layer, removing entire categories of application-code bugs from the security surface. The migration paths between patterns are real but solvable when the architecture is designed for flexibility from day one.

The dominant 2026 default for B2B saas is pooled with Postgres RLS, multi-region deployment for GDPR compliance, per-tenant rate limits and connection caps for noisy neighbor protection, and tenant-tagged observability across metrics, logs, and traces. This combination handles the first 500 to 1,000 tenants for most saas without architectural change, and supports selective migration to bridge or silo for the few outlier tenants that demand it.

Multi-Tenant Saas Architecture FAQ

1. Should I start with RLS and migrate to silos later?

Yes, for almost all B2B saas in 2026. The pooled pattern with Postgres RLS handles the first 500 to 1,000 tenants without architectural change. Selective migration to silo for specific enterprise tenants happens at the rate of 1 to 5 percent of the tenant base, well after the saas has revenue to fund the migration work. Starting with silo is over-engineering for the first 2 to 3 years.

2. Does multi-tenancy matter with only 10 customers?

Yes. The architectural cost of designing multi-tenant saas architecture from day one (RLS, tenant_id columns, middleware) is 2 to 4 days. The architectural cost of retrofitting it at customer 50 is 4 to 8 weeks plus a security review. Even with 10 customers, the design-it-in path is cheaper than design-it-later because no migration is ever required.

3. How does tenancy affect HIPAA compliance?

HIPAA permits multi-tenancy with strong technical controls (RLS, encryption, audit logs, signed BAAs). Most pooled architectures with proper controls are HIPAA-compatible. Silo becomes necessary when specific enterprise customer contracts demand physical separation, a requirement that comes from hospital-system and government-healthcare procurement teams more often than from HIPAA itself.

4. What is the cheapest multi-tenant saas architecture for a bootstrapped saas?

Pooled with Postgres RLS on a managed cloud (Supabase, Neon, AWS RDS). Free tier or sub-$50 per month at MVP scale. The marginal cost of an additional tenant is essentially zero. This is the only pattern compatible with bootstrapped economics; bridge and silo require enough revenue to fund the operational overhead.

5. Should each customer get their own subdomain?

For B2B saas, yes. Subdomain-based tenant identification is the strongest user-facing pattern, supports brand surface, and integrates cleanly with SSO. For prosumer or internal-facing saas, path-based is acceptable. Avoid header-only or JWT-only tenant identification for customer-facing applications because there is no visual cue that the user is in the right tenant.

6. Is Supabase good for multi-tenant saas?

Yes for MVP, with caveats. Supabase provides Postgres with RLS support and managed auth, which is the right baseline for pooled multi-tenant saas architecture. The caveat: Supabase pricing scales with database size, which can become expensive at moderate tenant counts. Teams that outgrow it typically move to AWS RDS or Neon around the seed-to-Series-A boundary, keeping the same RLS-based architecture.

7. How do I test tenant isolation?

Three test patterns. Unit tests that verify RLS policies block cross-tenant queries when the wrong tenant context is set. Integration tests that spin up two tenants, perform actions in tenant A, and assert tenant B cannot see them through any API endpoint. Periodic audit scripts that scan production for queries lacking tenant_id filters or admin role usage outside expected paths. The audit scripts catch drift; the unit and integration tests catch regressions.